1. ITAv’s Network Orchestrator – NetOr

ITAv’s Network Orchestrator, NetOr, is an OSS/BSS system that operates over the operator’s 5G infrastructure and services, abstracting the complex actions required to deploy inter-domain network services. NetOr should be seen as an improvement over existing Vertical Service Orchestrators, which share a common set of limitations: lack of standardization, monolithic architectures, and poor support for inter-domain scenarios. NetOr tackles these deficiencies by providing an end-to-end Network Slicing Platform built on a service-oriented architecture with increased maintainability, flexibility, and scalability. Finally, in the context of 5GASP, NetOr is responsible for enabling a multi-domain scenario through the creation of tunnels between the testbeds.

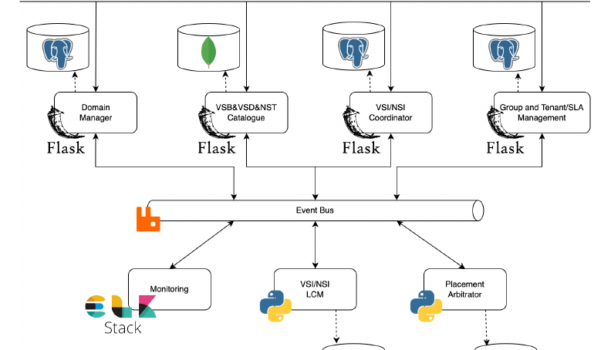

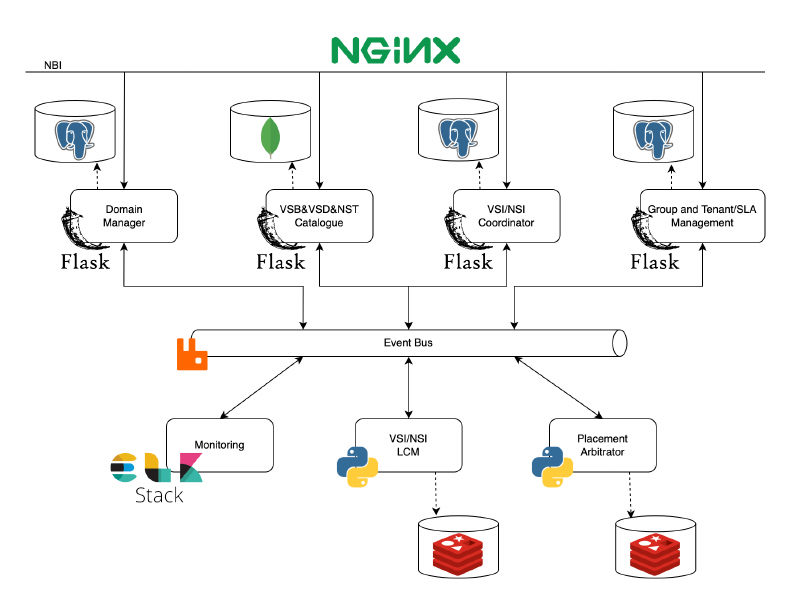

1.1 NetOr’s Architecture

NetOr follows an event-driven, microservice architecture intended to provide low coupling, flexibility, modularity, and scalability. Because its asynchronous communications allow operations to be processed in parallel, the execution of service management operations outperforms that of other existing orchestration systems. NetOr’s architecture is composed of 6 main components, including:

- Group/Tenant Manager: This component is responsible for handling both the Groups and Tenants of the system, allowing the creation, management, and deletion of both. It serves not only as a persistence service that stores and provides those two entities, but also as an Identity Provider (IdP) for the rest of the system.

- Domain Manager: Responsible for managing all domain-related operations. Besides providing CRUD operations over the domains, this entity also handles communication with the entities responsible for managing each domain. This communication goes through drivers: multiple drivers can coexist and new ones are easy to add, which makes NetOr technology-agnostic and enables communication with different orchestration technologies, from NFVOs to SDN controllers, if needed.

- VSI/NSI Coordinator: Conducts all operations related to the high-level Vertical Service Instances (VSIs), such as their creation, the execution of runtime operations, termination, and deletion. It is responsible for triggering the VSI orchestration processes for the rest of the system.

- VSI/NSI LCM Manager: This entity manages and coordinates the Vertical Service Instances and their possible sub-components, such as NSIs and NSs. To do so, each VSI spawns a new management agent that handles all operations required for that vertical service. This agent manages the entire lifecycle of the VSI, guaranteeing that NetOr always has the most recent information about the vertical service.

- Placement Arbitrator: Processes all information related to a Vertical Service, such as the blueprints, descriptors, and templates that configure it, to generate the required placement information, which defines the deployment location of each sub-component and any restrictions. It also considers the SLAs associated with the tenant and the parameters dynamically defined during instantiation. As a product of this processing, this component can also arbitrate over the VSI and its sub-components. To do so, each VSI spawns a new agent that processes that information, generates the placement directives, and arbitrates over them if necessary.
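To make the event-driven interaction between these components more concrete, the following is a minimal Python sketch, not NetOr’s actual implementation. It assumes a toy in-process `EventBus` in place of a real message broker, and the topic name `createVSI`, the class names, and the event fields are all hypothetical. It only illustrates the idea that a single event published by the Coordinator can be handled concurrently by the LCM Manager and the Placement Arbitrator, each keeping per-VSI state.

```python
import asyncio
from collections import defaultdict


class EventBus:
    """Toy in-process stand-in for a message broker (assumption, not NetOr's bus)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._handlers[topic].append(handler)

    async def publish(self, topic, event):
        # Every subscriber of the topic handles the event concurrently.
        await asyncio.gather(*(handler(event) for handler in self._handlers[topic]))


class LcmManager:
    """Keeps one (hypothetical) management agent state per VSI."""

    def __init__(self):
        self.agents = {}

    async def on_create_vsi(self, event):
        self.agents[event["vsi_id"]] = "instantiating"


class PlacementArbitrator:
    """Records a (hypothetical) placement decision per VSI."""

    def __init__(self):
        self.placements = {}

    async def on_create_vsi(self, event):
        # A real arbitrator would also weigh blueprints, descriptors, and SLAs.
        self.placements[event["vsi_id"]] = event["domain"]


def run_demo():
    async def scenario():
        bus = EventBus()
        lcm, arbitrator = LcmManager(), PlacementArbitrator()
        bus.subscribe("createVSI", lcm.on_create_vsi)
        bus.subscribe("createVSI", arbitrator.on_create_vsi)
        # The Coordinator's role is reduced here to publishing one event.
        await bus.publish("createVSI", {"vsi_id": "vsi-1", "domain": "ITAv"})
        return lcm.agents, arbitrator.placements

    return asyncio.run(scenario())


agents, placements = run_demo()
print(agents, placements)
```

Since the publisher never calls the subscribers directly, a new component can be attached to the same topic without touching the existing ones, which is the low-coupling property the architecture aims for.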

2. How is NetOr being used in 5GASP?

As previously stated, NetOr is the orchestrator used in 5GASP to provide inter-facility connectivity. Through NetOr, we orchestrate and manage a fully-fledged mesh network between all of the project’s testbeds, providing connectivity between services hosted at different sites.

Currently, 5GASP encompasses an inter-domain mesh network between 4 of its facilities – ITAv’s, UoP’s, OdinS’, and ININ’s testbeds. We aim to integrate the remaining testbeds (Orange Romania and Bristol) by the end of 2022, which will enable end-to-end connectivity between all of 5GASP’s sites.

To orchestrate and configure the inter-domain mesh network, we take advantage of Service Discovery mechanisms. Through these mechanisms, the WireGuard peers deployed at each testbed learn the location of the other peers. Service Discovery also lets the peers exchange all the information required to create an overlay tunnel between 2 different sites. Together, these tunnels make up the described mesh network.
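As an illustration of what peers need to learn from each other, here is a hedged Python sketch. It assumes a hypothetical registry of `PeerRecord` entries standing in for whatever the Service Discovery mechanism returns. All keys, endpoints, and subnets are placeholders, and the function names are invented for this example. The generated `[Peer]` sections use the standard WireGuard configuration keys `PublicKey`, `Endpoint`, and `AllowedIPs`.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class PeerRecord:
    """What one site would advertise through service discovery (hypothetical)."""
    site: str
    public_key: str
    endpoint: str
    allowed_ips: str


# Placeholder values only: documentation-range addresses and dummy keys.
REGISTRY = [
    PeerRecord("ITAv", "<itav-public-key>", "192.0.2.10:51820", "10.100.1.0/24"),
    PeerRecord("UoP", "<uop-public-key>", "192.0.2.20:51820", "10.100.2.0/24"),
    PeerRecord("OdinS", "<odins-public-key>", "192.0.2.30:51820", "10.100.3.0/24"),
    PeerRecord("ININ", "<inin-public-key>", "192.0.2.40:51820", "10.100.4.0/24"),
]


def render_peer_sections(local_site, registry):
    """Build the [Peer] sections a site needs to reach every other site."""
    sections = []
    for peer in sorted(registry, key=lambda p: p.site):
        if peer.site == local_site:
            continue  # a site does not list itself as a peer
        sections.append("\n".join([
            "[Peer]",
            f"# {peer.site}",
            f"PublicKey = {peer.public_key}",
            f"Endpoint = {peer.endpoint}",
            f"AllowedIPs = {peer.allowed_ips}",
        ]))
    return "\n\n".join(sections)


def mesh_tunnels(registry):
    """List every site pair that needs a tunnel in a full mesh."""
    return list(combinations(sorted(p.site for p in registry), 2))


print(render_peer_sections("ITAv", REGISTRY))
print(len(mesh_tunnels(REGISTRY)), "tunnels in the full mesh")
```

With 4 sites, a full mesh requires one tunnel per pair of sites, so each site ends up with 3 peer sections.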

Given everything just described, we can affirm that 5GASP is equipped with a fully automated orchestrator to deploy and manage an inter-domain mesh network connecting all the project’s facilities.

3. Future Work

As future work, we aim to further develop NetOr, making it capable of providing more functionality. To this end, we will work on:

- Monitoring Mechanisms: To monitor the behavior of the inter-domain mesh network. This way, NetOr will know when a WireGuard peer is not behaving as expected. By identifying erroneous behaviors, it can act to resolve the problems causing them.

- SLA Enforcement: Leveraging NetOr’s monitoring capabilities, we aim to implement mechanisms to enforce the system’s defined SLAs. This will increase the reliability of our solution.

- Route Dynamic Optimization: To enforce SLAs, we may need to dynamically update the routing of the inter-domain mesh network. For instance, if one WireGuard peer is offline, we must be able to route the traffic through a different peer, so as not to impair inter-facility connectivity.

- Adoption of Security Mechanisms: Given that the inter-testbed network enables full connectivity between different testbeds, a testbed owner may wish to secure their testbed against the other peer testbeds. This is of utmost importance: if one testbed is compromised, the intruders can try to exploit the connected testbeds through the inter-domain tunnels. To address this possibility, we aim to deploy a VNF encompassing an Intrusion Prevention and Detection System (IPS/IDS) alongside each of the WireGuard VNFs. This way, each testbed will be able to protect itself from attacks coming from the other testbeds.
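The rerouting idea from the Route Dynamic Optimization item can be sketched as a shortest-path search that skips offline peers. This is only an illustrative Python sketch under stated assumptions: the `find_route` function is hypothetical, and the tunnel list below is a deliberately partial topology, chosen so that a relay is needed, rather than the project’s actual mesh.

```python
from collections import deque


def find_route(src, dst, tunnels, offline=frozenset()):
    """Return the shortest hop path from src to dst that avoids offline sites.

    Returns None when no such path exists. Breadth-first search is used,
    so the first path that reaches dst has the fewest hops.
    """
    if src in offline or dst in offline:
        return None
    adjacency = {}
    for a, b in tunnels:
        if a in offline or b in offline:
            continue  # tunnels touching an offline peer are unusable
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    queue = deque([[src]])
    visited = {src}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == dst:
            return path
        for neighbor in sorted(adjacency.get(node, ())):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


# Hypothetical partial topology: ITAv and ININ have no direct tunnel.
TUNNELS = [("ITAv", "UoP"), ("UoP", "ININ"), ("ITAv", "OdinS"), ("OdinS", "ININ")]

print(find_route("ITAv", "ININ", TUNNELS))
print(find_route("ITAv", "ININ", TUNNELS, offline={"OdinS"}))
```

A monitoring mechanism would feed the `offline` set. Once a peer is reported as down, recomputing the route yields an alternative relay, or `None` when the destination can no longer be reached.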

4. NetOr’s GitHub Repository

If you want to check NetOr’s code, take a look at its GitHub repository here.